Interactive Financial Analytics with Python & IPython¶

Eurex Tutorial with Examples based on the VSTOXX Volatility Index

Dr. Yves J. Hilpisch

The Python Quants GmbH

PyData London – 21. February 2014

You find the presentation and the IPython Notebook here:

About Me¶

A brief bio:

- Managing Partner of The Python Quants

- Founder of Visixion GmbH – The Python Quants

- Lecturer Mathematical Finance at Saarland University

- Focus on Financial Industry and Financial Analytics

- Book (2013) "Derivatives Analytics with Python"

- Book (July 2014) "Python for Finance", O'Reilly

- Dr.rer.pol in Mathematical Finance

- Graduate in Business Administration

- Martial Arts Practitioner and Fan

See www.hilpisch.com.

Python for Analytics¶

Corporations, decision makers and analysts nowadays generally face a number of problems with data:

- sources: data typically comes from different sources, like from the Web, from in-house databases or it is generated in-memory, e.g. for simulation purposes

- formats: data is generally available in different formats, like SQL databases/tables, Excel files, CSV files, arrays, proprietary formats

- structure: data typically comes differently structured, be it unstructured, simply indexed, hierarchically indexed, in table form, in matrix form, in multidimensional arrays

- completeness: real-world data is generally incomplete, i.e. there is missing data (e.g. along an index) or multiple series of data cannot be aligned correctly (e.g. two time series with different time indexes)

- conventions: for some types of data there a many “competing” conventions with regard to formatting, like for dates and time

- interpretation: some data sets contain information that can be easily and intelligently interpreted, like a time index, others not, like texts

- performance: reading, streamlining, aligning, analyzing – i.e. processing – (big) data sets might be slow

In addition to these data-oriented problems, there typically are organizational issues that have to be considered:

- departments: the majority of companies is organized in departments with different technologies, databases, etc., leading to “data silos”

- analytics skills: analytical and business skills in general are possessed by people working in line functions (e.g. production) or administrative functions (e.g. finance)

- technical skills: technical skills, like retrieving data from databases and visualizing them, are generally possessed by people in technology functions (e.g. development, systems operations)

This tutorial focuses on

- Python as a general purpose financial analytics environment

- interactive analytics examples

- prototyping-like Python usage

It does not address such important issues like

- architectural issues regarding hardware and software

- development processes, testing, documentation and production

- real world problem modeling

Some Python Fundamentals¶

Fundamental Python Libraries¶

A fundamental Python stack for interactive data analytics and visualization should at least contain the following libraries tools:

- Python – the Python interpreter itself

- NumPy – high performance, flexible array structures and operations

- SciPy – collection of scientific modules and functions (e.g. for regression, optimization, integration)

- pandas – time series and panel data analysis and I/O

- PyTables – hierarchical, high performance database (e.g. for out-of-memory analytics)

- matplotlib – 2d and 3d visualization

- IPython – interactive data analytics, visualization, publishing

It is best to use either the Python distribution Anaconda or the Web-based analytics environment Wakari. Both provide almost "complete" Python environments.

For example, pandas can, among others, help with the following data-related problems:

- sources: pandas reads data directly from different data sources such as SQL databases, Excel files or JSON based APIs

- formats: pandas can process input data in different formats like CSV files or Excel files; it can also generate output in different formats like CSV, XLS, HTML or JSON

- structure: pandas' strength lies in structured data formats, like time series and panel data

- completeness: pandas automatically deals with missing data in most circumstances, e.g. computing sums even if there are a few or many “not a number”, i.e. missing, values

- conventions/interpretation: for example, pandas can interpret and convert different date-time formats to Python datetime objects and/or timestamps

- performance: the majority of pandas classes, methods and functions is implemented in a performance-oriented fashion making heavy use of the Python/C compiler Cython

First Interactive Steps with Python¶

As a simple example let's generate a NumPy array with five sets of 1000 (pseudo-)random numbers each.

import numpy as np # this imports the NumPy library

data = np.random.standard_normal((5, 1000)) # generate 5 sets with 1000 rn each

data[:, :5].round(3) # print first five values of each set rounded to 3 digits

array([[ 1.304, 0.641, -0.758, 0.792, 1.963],

[ 0.955, -0.482, 1.146, -0.076, 0.013],

[-1.67 , 0.814, -0.172, -0.57 , -1.921],

[-0.813, 1.569, -0.019, -0.658, -1.259],

[-1.777, -2.025, -0.32 , -0.684, 0.157]])

Let's plot a histogram of the 1st, 2nd and 3rd data set.

import matplotlib as mpl # this imports matplotlib

import matplotlib.pyplot as plt # this imports matplotlib.pyplot

%matplotlib inline

# inline plotting

plt.hist([data[0], data[1], data[2]], label=['Set 0', 'Set 1', 'Set 2'])

plt.grid(True) # grid for better readability

plt.legend()

<matplotlib.legend.Legend at 0x105baab50>

We then want to plot the 'running' cumulative sum of each set.

plt.figure() # initialize figure object

plt.grid(True)

for data_set in enumerate(data): # iterate over all rows

plt.plot(data_set[1].cumsum(), label='Set %s' % data_set[0])

# plot the running cumulative sums for each row

plt.legend(loc=0) # write legend with labels

<matplotlib.legend.Legend at 0x105eabb50>

Some fundamental statistics from our data sets.

data.mean(axis=1) # average value of the 5 sets

array([-0.04422916, -0.08201617, 0.01026341, -0.0120168 , -0.01186359])

data.std(axis=1) # standard deviation of the 5 sets

array([ 1.00640752, 1.00715935, 1.01333978, 1.00522446, 0.99632747])

np.corrcoef(data).round(3) # correltion matrix of the 5 data sets

array([[ 1. , -0.029, -0.105, 0.001, 0.009],

[-0.029, 1. , 0.02 , -0.024, 0.03 ],

[-0.105, 0.02 , 1. , -0.005, -0.003],

[ 0.001, -0.024, -0.005, 1. , -0.013],

[ 0.009, 0.03 , -0.003, -0.013, 1. ]])

First Financial Analytics Example¶

We need to make a couple of imports for what is to come.

import pandas as pd

import pandas.io.data as pdd

from urllib import urlretrieve

The convenience function DataReader makes it easy to read historical stock price data from Yahoo! Finance (http://finance.yahoo.com).

index = pdd.DataReader('^GDAXI', data_source='yahoo', start='2007/3/30')

# e.g. the EURO STOXX 50 ticker symbol -- ^SX5E

index.info()

<class 'pandas.core.frame.DataFrame'> DatetimeIndex: 1761 entries, 2007-03-30 00:00:00 to 2014-02-19 00:00:00 Data columns (total 6 columns): Open 1761 non-null float64 High 1761 non-null float64 Low 1761 non-null float64 Close 1761 non-null float64 Volume 1761 non-null int64 Adj Close 1761 non-null float64 dtypes: float64(5), int64(1)

pandas strength is the handling of indexed/labeled/structured data, like times series data.

index.tail()

| Open | High | Low | Close | Volume | Adj Close | |

|---|---|---|---|---|---|---|

| Date | ||||||

| 2014-02-13 | 9522.66 | 9600.64 | 9479.86 | 9596.77 | 93209100 | 9596.77 |

| 2014-02-14 | 9615.52 | 9677.53 | 9593.37 | 9662.40 | 91504800 | 9662.40 |

| 2014-02-17 | 9661.80 | 9682.19 | 9645.51 | 9656.76 | 45850100 | 9656.76 |

| 2014-02-18 | 9674.80 | 9690.97 | 9614.40 | 9659.78 | 58827300 | 9659.78 |

| 2014-02-19 | 9641.45 | 9695.86 | 9596.42 | 9660.05 | 72892900 | 9660.05 |

5 rows × 6 columns

pandas makes it easy to implement vectorized operations, like calculating log-returns over whole time series.

index['Returns'] = np.log(index['Close'] / index['Close'].shift(1))

In addition, pandas makes plotting quite simple and compact.

index[['Close', 'Returns']].plot(subplots=True, style='b', figsize=(8, 5))

array([<matplotlib.axes.AxesSubplot object at 0x1077eb950>,

<matplotlib.axes.AxesSubplot object at 0x107802bd0>], dtype=object)

We now want to check how annual volatility changes over time.

index['Mov_Vol'] = pd.rolling_std(index['Returns'], window=252) * np.sqrt(252)

Obviously, the annual volatility changes significantly over time.

index[['Close', 'Returns', 'Mov_Vol']].plot(subplots=True, style='b', figsize=(8, 5))

array([<matplotlib.axes.AxesSubplot object at 0x1079804d0>,

<matplotlib.axes.AxesSubplot object at 0x1079a6f50>,

<matplotlib.axes.AxesSubplot object at 0x108352ad0>], dtype=object)

Exercise¶

Trend-based investment strategy with the EURO STOXX 50 index:

- 2 trends 42d & 252d

- long, short, cash positions

- no transaction costs

Signal generation:

- invest (go long) when the 42d trend is more than 100 points above the 252d trend

- sell (go short) when the 42d trend is more than 20 points below the 252d trend

- invest in cash (no interest) when neither of both is true

Historical Correlation between EURO STOXX 50 and VSTOXX¶

It is a stylized fact that stock indexes and related volatility indexes are highly negatively correlated. The following example analyzes this stylized fact based on the EURO STOXX 50 stock index and the VSTOXX volatility index using Ordinary Least-Squares regession (OLS).

First, we collect historical data for both the EURO STOXX 50 stock and the VSTOXX volatility index.

import pandas as pd

import datetime as dt

from urllib import urlretrieve

es_url = 'http://www.stoxx.com/download/historical_values/hbrbcpe.txt'

vs_url = 'http://www.stoxx.com/download/historical_values/h_vstoxx.txt'

urlretrieve(es_url, 'es.txt')

urlretrieve(vs_url, 'vs.txt')

('vs.txt', <httplib.HTTPMessage instance at 0x108360638>)

The EURO STOXX 50 data is not yet in the right format. Some house cleaning is necessary (I).

lines = open('es.txt').readlines() # reads the whole file line-by-line

lines[:5] # header not well formatted

['Price Indices - EURO Currency\n', 'Date ;Blue-Chip;Blue-Chip;Broad ; Broad ;Ex UK ;Ex Euro Zone;Blue-Chip; Broad\n', ' ; Europe ;Euro-Zone;Europe ;Euro-Zone; ; ; Nordic ; Nordic\n', ' ; SX5P ; SX5E ;SXXP ;SXXE ; SXXF ; SXXA ; DK5F ; DKXF\n', '31.12.1986;775.00 ; 900.82 ; 82.76 ; 98.58 ; 98.06 ; 69.06 ; 645.26 ; 65.56\n']

The EURO STOXX 50 data is not yet in the right format. Some house cleaning is necessary (II).

lines[3883:3890] # from 27.12.2001 additional semi-colon

['20.12.2001;3537.34; 3617.47; 286.07; 300.97; 317.10; 267.23; 5268.36 ; 363.19\n', '21.12.2001;3616.80; 3696.44; 291.39; 306.60; 322.55; 272.18; 5360.52 ; 370.94\n', '24.12.2001;3622.85; 3696.98; 291.90; 306.77; 322.69; 272.95; 5360.52 ; 370.94\n', '27.12.2001;3686.23; 3778.39; 297.11; 312.43; 327.57; 277.68; 5479.59; 378.69;\n', '28.12.2001;3706.93; 3806.13; 298.73; 314.52; 329.94; 278.87; 5585.35; 386.99;\n', '02.01.2002;3627.81; 3755.56; 293.69; 311.43; 326.77; 272.38; 5522.25; 380.09;\n', '03.01.2002;3699.09; 3833.09; 299.09; 317.54; 332.62; 277.08; 5722.57; 396.12;\n']

The EURO STOXX 50 data is not yet in the right format. Some house cleaning is necessary (III).

lines = open('es.txt').readlines() # reads the whole file line-by-line

new_file = open('es50.txt', 'w') # opens a new file

new_file.writelines('date' + lines[3][:-1].replace(' ', '') + ';DEL' + lines[3][-1])

# writes the corrected third line (additional column name)

# of the orginal file as first line of new file

new_file.writelines(lines[4:-1]) # writes the remaining lines of the orginal file

The EURO STOXX 50 data is not yet in the right format. Some house cleaning is necessary (IV).

list(open('es50.txt'))[:5] # opens the new file for inspection

['date;SX5P;SX5E;SXXP;SXXE;SXXF;SXXA;DK5F;DKXF;DEL\n', '31.12.1986;775.00 ; 900.82 ; 82.76 ; 98.58 ; 98.06 ; 69.06 ; 645.26 ; 65.56\n', '01.01.1987;775.00 ; 900.82 ; 82.76 ; 98.58 ; 98.06 ; 69.06 ; 645.26 ; 65.56\n', '02.01.1987;770.89 ; 891.78 ; 82.57 ; 97.80 ; 97.43 ; 69.37 ; 647.62 ; 65.81\n', '05.01.1987;771.89 ; 898.33 ; 82.82 ; 98.60 ; 98.19 ; 69.16 ; 649.94 ; 65.82\n']

Now, the data can be safely read into a DataFrame object.

es = pd.read_csv('es50.txt', index_col=0, parse_dates=True, sep=';', dayfirst=True)

del es['DEL'] # delete the helper column

es.info()

<class 'pandas.core.frame.DataFrame'> DatetimeIndex: 6997 entries, 1986-12-31 00:00:00 to 2014-02-18 00:00:00 Data columns (total 8 columns): SX5P 6997 non-null float64 SX5E 6997 non-null float64 SXXP 6997 non-null float64 SXXE 6997 non-null object SXXF 6996 non-null float64 SXXA 6996 non-null float64 DK5F 6996 non-null float64 DKXF 6996 non-null float64 dtypes: float64(7), object(1)

The VSTOXX data can be read without touching the raw data.

vs = pd.read_csv('vs.txt', index_col=0, header=2, parse_dates=True, sep=',', dayfirst=True)

# you can alternatively read from the Web source directly

# without saving the csv file to disk:

# vs = pd.read_csv(vs_url, index_col=0, header=2,

# parse_dates=True, sep=',', dayfirst=True)

We now merge the data for further analysis.

import datetime as dt

data = pd.DataFrame({'EUROSTOXX' :

es['SX5E'][es.index > dt.datetime(1999, 12, 31)]})

data = data.join(pd.DataFrame({'VSTOXX' :

vs['V2TX'][vs.index > dt.datetime(1999, 12, 31)]}))

data.info()

<class 'pandas.core.frame.DataFrame'> DatetimeIndex: 3622 entries, 2000-01-03 00:00:00 to 2014-02-18 00:00:00 Data columns (total 2 columns): EUROSTOXX 3622 non-null float64 VSTOXX 3600 non-null float64 dtypes: float64(2)

Let's inspect the two time series.

data.head()

| EUROSTOXX | VSTOXX | |

|---|---|---|

| date | ||

| 2000-01-03 | 4849.22 | 30.9845 |

| 2000-01-04 | 4657.83 | 33.2225 |

| 2000-01-05 | 4541.75 | 32.5944 |

| 2000-01-06 | 4500.69 | 31.1811 |

| 2000-01-07 | 4648.27 | 27.4407 |

5 rows × 2 columns

A picture can tell almost the complete story.

data.plot(subplots=True, grid=True, style='b', figsize=(10, 5))

array([<matplotlib.axes.AxesSubplot object at 0x108468c90>,

<matplotlib.axes.AxesSubplot object at 0x1078ce090>], dtype=object)

We now generate log returns for both time series.

rets = np.log(data / data.shift(1))

rets.head()

| EUROSTOXX | VSTOXX | |

|---|---|---|

| date | ||

| 2000-01-03 | NaN | NaN |

| 2000-01-04 | -0.040268 | 0.069740 |

| 2000-01-05 | -0.025237 | -0.019087 |

| 2000-01-06 | -0.009082 | -0.044328 |

| 2000-01-07 | 0.032264 | -0.127785 |

5 rows × 2 columns

To this new data set, also stored in a DataFrame object, we apply OLS.

xdat = rets['EUROSTOXX']

ydat = rets['VSTOXX']

model = pd.ols(y=ydat, x=xdat)

model

-------------------------Summary of Regression Analysis-------------------------

Formula: Y ~ <x> + <intercept>

Number of Observations: 3577

Number of Degrees of Freedom: 2

R-squared: 0.5544

Adj R-squared: 0.5543

Rmse: 0.0379

F-stat (1, 3575): 4447.8744, p-value: 0.0000

Degrees of Freedom: model 1, resid 3575

-----------------------Summary of Estimated Coefficients------------------------

Variable Coef Std Err t-stat p-value CI 2.5% CI 97.5%

--------------------------------------------------------------------------------

x -2.7183 0.0408 -66.69 0.0000 -2.7982 -2.6384

intercept -0.0007 0.0006 -1.10 0.2704 -0.0019 0.0005

---------------------------------End of Summary---------------------------------

Again, we want to see how our results look graphically.

plt.plot(xdat, ydat, 'r.')

ax = plt.axis() # grab axis values

x = np.linspace(ax[0], ax[1] + 0.01)

plt.plot(x, model.beta[1] + model.beta[0] * x, 'b', lw=2)

plt.grid(True)

plt.axis('tight')

(-0.10000000000000001, 0.16, -0.43562265909764758, 0.43687964474802654)

Let us see if we can identify systematics over time. And indeed, during the crisis 2007/2008 (yellow dots) volatility has been more pronounced than more recently (red dots).

mpl_dates = mpl.dates.date2num(rets.index)

plt.figure(figsize=(8, 4))

plt.scatter(rets['EUROSTOXX'], rets['VSTOXX'], c=mpl_dates, marker='o')

plt.grid(True)

plt.xlabel('EUROSTOXX')

plt.ylabel('VSTOXX')

plt.colorbar(ticks=mpl.dates.DayLocator(interval=250),

format=mpl.dates.DateFormatter('%d %b %y'))

<matplotlib.colorbar.Colorbar instance at 0x10afb5bd8>

Exercise¶

We want to test whether the EURO STOXX 50 and/or the VSTOXX returns are normally distributed or not (e.g. if they might have fat tails). We want to do a

- graphical illustration (using qqplot of statsmodels.api) and a

- statistical test (using normaltest of scipy.stats)

Add on: plot a histogram of the log return frequencies and compare that to a normal distribution with same mean and variance (using e.g. norm.pdf from scipy.stats)

Constant Proportion VSTOXX Investment¶

There has been a number of studies which have illustrated that constant proportion investments in volatility derivatives – given a diversified equity portfolio – might improve investment performance considerably. See, for instance, the study

The Benefits of Volatility Derivatives in Equity Portfolio Management

We now want to replicate (in a simplified fashion) what you can flexibly test here on the basis of two backtesting applications for VSTOXX-based investment strategies:

The strategy we are going to implement and test is characterized as follows:

- An investor has total wealth of say 100,000 EUR

- He invests, say, 70% of that into a diversified equity portfolio

- The remainder, i.e. 30%, is invested in the VSTOXX index directly

- Through (daily) trading the investor keeps the proportions constant

- No transaction costs apply, all assets are infinitely divisible

We already have the necessary data available. However, we want to drop 'NaN' values and want to normalize the index values.

data = data.dropna()

data = data / data.ix[0] * 100

data.head()

| EUROSTOXX | VSTOXX | |

|---|---|---|

| date | ||

| 2000-01-03 | 100.000000 | 100.000000 |

| 2000-01-04 | 96.053180 | 107.222966 |

| 2000-01-05 | 93.659393 | 105.195824 |

| 2000-01-06 | 92.812659 | 100.634511 |

| 2000-01-07 | 95.856035 | 88.562668 |

5 rows × 2 columns

First, the initial invest.

invest = 100

cratio = 0.3

data['Equity'] = (1 - cratio) * invest / data['EUROSTOXX'][0]

data['Volatility'] = cratio * invest / data['VSTOXX'][0]

This can already be considered an static investment strategy.

data['Static'] = (data['Equity'] * data['EUROSTOXX']

+ data['Volatility'] * data['VSTOXX'])

data[['EUROSTOXX', 'Static']].plot(figsize=(10, 5))

<matplotlib.axes.AxesSubplot at 0x108981990>

Second, the dynamic strategy with daily adjustments to keep the value ratio constant.

for i in range(1, len(data)):

evalue = data['Equity'][i - 1] * data['EUROSTOXX'][i]

# value of equity position

vvalue = data['Volatility'][i - 1] * data['VSTOXX'][i]

# value of volatility position

tvalue = evalue + vvalue

# total wealth

data['Equity'][i] = (1 - cratio) * tvalue / data['EUROSTOXX'][i]

# re-allocation of total wealth to equity ...

data['Volatility'][i] = cratio * tvalue / data['VSTOXX'][i]

# ... and volatility position

Third, the total wealth position.

data['Dynamic'] = (data['Equity'] * data['EUROSTOXX']

+ data['Volatility'] * data['VSTOXX'])

data.head()

| EUROSTOXX | VSTOXX | Equity | Volatility | Static | Dynamic | |

|---|---|---|---|---|---|---|

| date | ||||||

| 2000-01-03 | 100.000000 | 100.000000 | 0.700000 | 0.300000 | 100.000000 | 100.000000 |

| 2000-01-04 | 96.053180 | 107.222966 | 0.724420 | 0.278124 | 99.404116 | 99.404116 |

| 2000-01-05 | 93.659393 | 105.195824 | 0.725761 | 0.276930 | 97.120322 | 97.106211 |

| 2000-01-06 | 92.812659 | 100.634511 | 0.718221 | 0.283884 | 95.159214 | 95.228521 |

| 2000-01-07 | 95.856035 | 88.562668 | 0.686354 | 0.318376 | 93.668025 | 93.987330 |

5 rows × 6 columns

A brief check if the ratios are indeed constant.

(data['Volatility'] * data['VSTOXX'] / data['Dynamic'])[:5]

date 2000-01-03 0.3 2000-01-04 0.3 2000-01-05 0.3 2000-01-06 0.3 2000-01-07 0.3 dtype: float64

(data['Equity'] * data['EUROSTOXX'] / data['Dynamic'])[:5]

date 2000-01-03 0.7 2000-01-04 0.7 2000-01-05 0.7 2000-01-06 0.7 2000-01-07 0.7 dtype: float64

Let us inspect the performance of the strategy.

data[['EUROSTOXX', 'Dynamic']].plot(figsize=(10, 5))

<matplotlib.axes.AxesSubplot at 0x10af45f10>

Exercise¶

Write a Python function which allows for an arbitrary but constant ratio to be invested in the VSTOXX index and which returns net performance values (in percent) for the constant proportion VSTOXX strategy.

Add on: find the ratio to be invested in the VSTOXX that gives the maximum performance.

Analyzing High Frequency Data¶

Using standard Python functionality and pandas, the code that follows reads intraday, high-frequency data from a Web source, plots it and resamples it.

url = 'http://hopey.netfonds.no/posdump.php?'

url += 'date=%s%s%s&paper=AAPL.O&csv_format=csv' % ('2014', '02', '19')

# you may have to adjust the date since only recent dates are available

urlretrieve(url, 'aapl.csv')

('aapl.csv', <httplib.HTTPMessage instance at 0x10bc1a7e8>)

AAPL = pd.read_csv('aapl.csv', index_col=0, header=0, parse_dates=True)

AAPL.info()

<class 'pandas.core.frame.DataFrame'> DatetimeIndex: 14649 entries, 2014-02-19 01:17:18 to 2014-02-19 22:16:26 Data columns (total 6 columns): bid 14649 non-null float64 bid_depth 14649 non-null int64 bid_depth_total 14649 non-null int64 offer 14649 non-null float64 offer_depth 14649 non-null int64 offer_depth_total 14649 non-null int64 dtypes: float64(2), int64(4)

The intraday evolution of the Apple stock price.

AAPL['bid'].plot()

<matplotlib.axes.AxesSubplot at 0x10bd2d950>

AAPL = AAPL[AAPL.index > dt.datetime(2014, 2, 19, 10, 0, 0)]

# only data later than 10am at that day

A resampling of the data is easily accomplished with pandas.

# this resamples the record frequency to 5 minutes, using mean as aggregation rule

AAPL_5min = AAPL.resample(rule='5min', how='mean').fillna(method='ffill')

AAPL_5min.head()

| bid | bid_depth | bid_depth_total | offer | offer_depth | offer_depth_total | |

|---|---|---|---|---|---|---|

| time | ||||||

| 2014-02-19 10:00:00 | 545.050000 | 100.000000 | 100.000000 | 545.993333 | 241.666667 | 241.666667 |

| 2014-02-19 10:05:00 | 545.423462 | 142.307692 | 142.307692 | 546.255769 | 107.692308 | 107.692308 |

| 2014-02-19 10:10:00 | 545.600270 | 127.027027 | 127.027027 | 546.164324 | 100.000000 | 100.000000 |

| 2014-02-19 10:15:00 | 545.654528 | 183.018868 | 183.018868 | 546.149811 | 100.000000 | 100.000000 |

| 2014-02-19 10:20:00 | 545.777556 | 173.333333 | 173.333333 | 546.155778 | 100.000000 | 100.000000 |

5 rows × 6 columns

Let's have a graphical look at the new data set.

AAPL_5min['bid'].plot()

<matplotlib.axes.AxesSubplot at 0x10bc05390>

With pandas you can easily apply custom functions to time series data.

AAPL_5min['bid'].apply(lambda x: 2 * 540 - x).plot()

# this mirrors the stock price development at

<matplotlib.axes.AxesSubplot at 0x10ccc0490>

Why Python for Financial Analytics & Visualization?¶

10 years ago, Python was considered exotic in the analytics space – at best. Languages/packages like R and Matlab dominated the scene. Today, Python has become a major force in financial analytics & visualization due to a number of characteristics:

- multi-purpose: prototyping, development, production, sytems administration – Python is one for all

- libraries: there is a library for almost any task or problem you face

- efficiency: Python speeds up all IT development tasks for analytics applications and reduces maintenance costs

- performance: Python has evolved from a scripting language to a 'meta' language with bridges to all high performance environments (e.g. LLVM, multi-core CPUs, GPUs, clusters)

- interoperalbility: Python seamlessly integrates with almost any other language and technology

- interactivity: Python allows domain experts to get closer to their business and financial data pools and to do real-time analytics

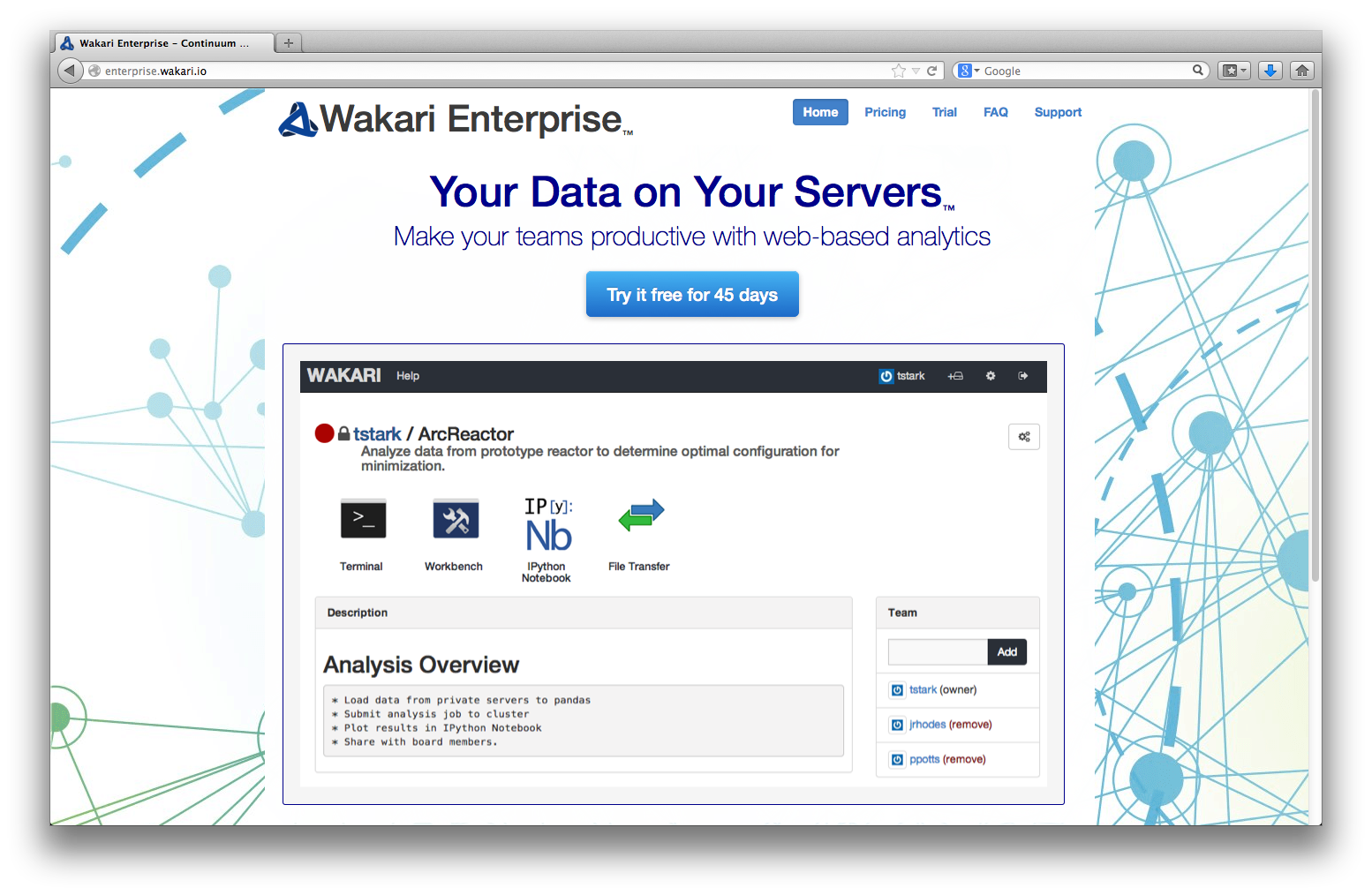

- collaboration: solutions like Wakari with IPython Notebook allow the easy sharing of code, data, results, graphics, etc.

One of the easiest ways to deploy Python today across a whole organization with a heterogenous IT infrastructure is via Wakari, Continuum's Web-/Browser- and Python-based Data Analytics environment. It is availble both as a cloud as well as an enterprise solution for in-house deployment.

The Python Quants GmbH – Python Data Exploration & Visualization¶

The Python Quants – the company Web site

Dr. Yves J. Hilpisch – my personal Web site

Derivatives Analytics with Python – my new book

Read an Excerpt and Order the Book

Contact Us

# Autosave Function for IPyton Notebook

def autosave(interval=3):

"""Autosave the notebook every interval (in minutes)"""

from IPython.core.display import Javascript

interval *= 60 * 1000 # JS wants intervals in miliseconds

tpl = 'setInterval ( "IPython.notebook.save_notebook()", %i );'

return Javascript(tpl % interval)

autosave()

<IPython.core.display.Javascript at 0x10cf93e90>